Error. It’s a subject no physician (or researcher, or analyst) wants to think about, especially when it comes to their own practice. And yet errors still occur. Research is still irreproducible; clinical tests still show false positives and false negatives; results still sometimes make no sense at all. Why? In the medical laboratory, at least, the problems may not be integral to the test itself – rather, they may arise from the way a sample was treated before it ever underwent testing: the preanalytical phase.

Pre-analytical Error in Pathology

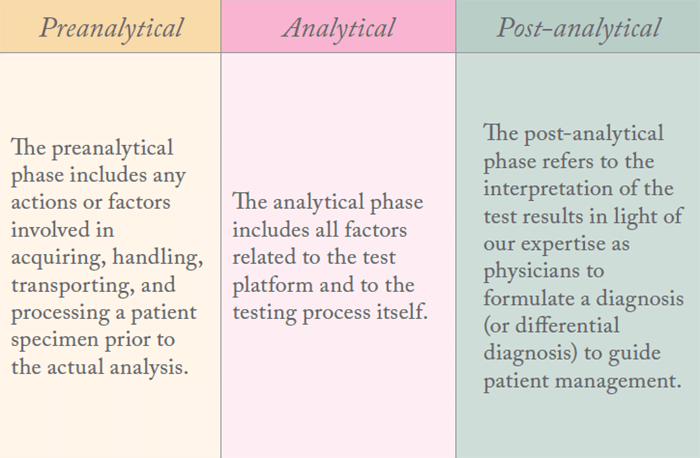

As a pathologist, I perform analytical tests on patient specimens to make diagnoses. The testing process is often separated into three familiar phases: preanalytical, analytical, and post-analytical (also known as the interpretative or consultative phase). We strive for precision and validity in all of our analyses so that the data we generate reflects the true biological state of the patient. It has been estimated that data from the pathology laboratory comprises as much as 80 percent of the objective, quantitative disease information that exists in a patient’s medical record – and much of this data directly guides patient management. This leaves little room for error. Flawed results mean flawed medical decision-making. In short, an incorrect answer from even a single test can have serious consequences for a patient.

Some preanalytical errors – specimen mislabeling, for example – are clerical; others are related to factors that compromise the quality of the specimen and may reduce or even destroy its suitability for certain types of testing. In other words, a particular test could be highly specific and sensitive, but would yield a spurious result if the analytes in the specimen of interest were artifactually altered or corrupted. For example, one research group has shown that a delay in time to stabilization (also known as “cold ischemia time”) can artifactually render a HER2-positive breast cancer specimen negative on Herceptest® analysis (1)(2)(3). When the result of a companion diagnostic test such as Herceptest® functions as a gateway to targeted therapy, artifactually induced false negative test results could incorrectly rule out treatment with a potentially life-saving drug – a devastating consequence.

Quality begets quality

In this era of “precision medicine,” diagnosis, prognosis, prediction, and treatment are often based on the molecular characteristics of the patient and on the molecular features of the disease. These characteristics are typically determined directly from the analysis of representative biospecimens – which means that, if we want to generate high-quality molecular analysis data, we need high-quality specimens. In fact, the increased power of modern molecular analysis technologies has raised the bar for the molecular quality of patient specimens; the better our testing methods get, the better our sampling methods must be to keep up. No matter how dazzling new analytical technologies may be, the “garbage in, garbage out” paradigm still applies to the data they produce. No technology can spin straw into gold! Preanalytical issues are central to specimen integrity and molecular quality. The myriad steps involved in acquisition, handling, processing, transportation, and storage can have profound effects on both the composition and quality of different molecular species in patient biospecimens. Safeguarding their molecular integrity in the preanalytical period is an immediate challenge; it can’t be delayed or disregarded. Once compromised, a specimen’s molecular quality cannot be retrieved. The molecular quality of a specimen at the time of fixation, when its biological activity is stopped, determines its fitness for testing. After that, if the specimen is well-preserved and carefully stored, its quality may remain essentially unchanged; otherwise, it will only further diminish as the specimen degrades over time. Therefore, preanalytical factors that directly impact a specimen’s molecular integrity can have an adverse effect on both real-time patient management and future decisions based on reanalysis of the same specimen. 
Additionally, if the patient enters a clinical trial and their specimens are used for correlative scientific studies or discovery research, the downstream consequences of bad data and irreproducible study results can be profound. We are just beginning to appreciate the fact that a huge amount – more than half, in fact (4) – of published biomedical data cannot be reproduced. No one has yet looked closely at the degree to which poor or unknown patient specimen quality may contribute to this problem. I suspect that, when we do, it will be significant.

A matter of standards

Why are there currently no established or enforced standards around preanalytics? It’s a difficult question – with a complicated, multifactorial answer.

First, I see a lack of awareness and a need for education about preanalytics throughout the medical community. Pathologists, surgeons, and every other professional who is part of the specimen chain of custody (radiologists, pathology assistants, nurses, phlebotomists, medical technologists, and many more) need to be educated about preanalytics. It’s vital that they all understand the role they play as links in an unbreakable quality chain.

Second, there is a dearth of biospecimen science data upon which to build evidence-based procedures for preanalytics that affect precision medicine. This kind of information is focused on the specimen itself and how it is affected by different preanalytical factors, alone or in combination. It’s the data that everyone wants – but no one wants to pay for! We need much more biospecimen science to fully understand the impact of different preanalytical factors on different biomolecular specimens of different sample types. Furthermore, specific analytical platforms may have different requirements for analyte molecular quality – something else that I fear may often be overlooked. These data are foundational for precision medicine, and yet, at the moment, they are sadly lacking.

Third, old practice habits are hard to break. Legacy systems in medical institutions may be difficult to redesign to accommodate changes in preanalytical workflows. By and large, we are still handling patient specimens the same way we have for decades, with no sign of change on the way. In addition, patient specimen preanalytics cross many professional domains, and there are no cross-cutting standards to assure that key preanalytical steps are controlled and documented in an end-to-end fashion.
Fourth, there is no specific reimbursement for the professional time, expertise and effort required to address preanalytics in real time – as they should be. This issue must be addressed to assure compliance with preanalytical standards across the board. People typically do what they are paid to do, even if they don’t fully understand the scientific reasons behind the mandates.

Fifth and finally, there are still many who discount the importance of preanalytics, which I find very hard to comprehend. Worse still, they may discount the importance of specimen quality or reject the premise of “garbage in, garbage out” altogether! There are those who believe that, through the wonders of technology and data science, data quantity can overcome the challenges of poor data quality. In my opinion, this kind of thinking is unrealistic and unacceptable – even potentially dangerous – at the level of the individual patient. I would argue that it is misplaced at the population data level as well. If precision is truly the goal, there is no conceivable situation in which preanalytical variation is truly unimportant and can be confidently disregarded – and thinking so can only lead to disaster.

Sources of error

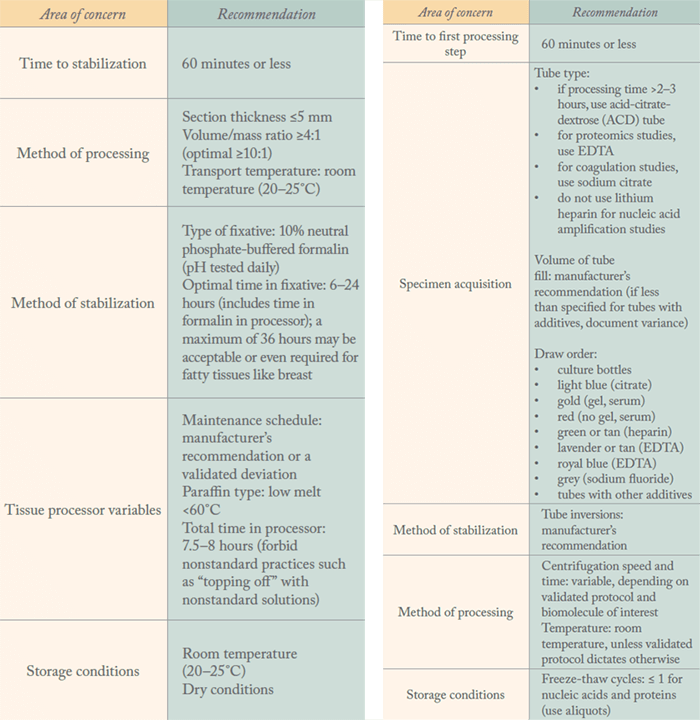

In a December 2014 think tank sponsored by the National Biomarker Development Alliance (NBDA), my private and public sector colleagues and I established a “Top 10” list of key contributors to preanalytical error – the top five for tissue specimens and the top five for blood samples. For tissues, the top five sources of error are:

- Cold ischemia time

- Method of processing (section thickness, temperature, fixative volume to tissue mass ratio)

- Type and quality of fixative

- Total time in formalin

- Storage conditions

For blood samples, the top five sources of error are:

- Time to processing

- Method of draw (draw order, tube type, tube fill volume)

- Method of stabilization (tube inversions)

- Method of processing (centrifugation speed, centrifugation time, temperature)

- Storage conditions
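Several of the tissue factors above are simple time intervals, which makes them straightforward to record and check. The following is a minimal sketch of how that documentation might look, not a description of any existing laboratory system. The class name, field names, function name, and the limits passed in the example are all hypothetical; real limits would have to come from a laboratory's own validated protocol.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class TissueTimingRecord:
    """Hypothetical record of the time-based tissue preanalytical factors."""
    removed_from_patient: datetime   # start of cold ischemia
    placed_in_fixative: datetime     # end of cold ischemia, start of fixation
    removed_from_fixative: datetime  # end of fixation

    def cold_ischemia_time(self) -> timedelta:
        """Delay between removal from the patient and stabilization."""
        return self.placed_in_fixative - self.removed_from_patient

    def total_time_in_fixative(self) -> timedelta:
        """Total duration the specimen spent in fixative."""
        return self.removed_from_fixative - self.placed_in_fixative


def find_deviations(
    record: TissueTimingRecord,
    max_cold_ischemia: timedelta,
    min_fixation: timedelta,
    max_fixation: timedelta,
) -> List[str]:
    """Return a description of each interval that falls outside the given limits."""
    deviations = []
    if record.cold_ischemia_time() > max_cold_ischemia:
        deviations.append("cold ischemia time exceeds limit")
    if record.total_time_in_fixative() < min_fixation:
        deviations.append("time in fixative below minimum")
    if record.total_time_in_fixative() > max_fixation:
        deviations.append("time in fixative exceeds maximum")
    return deviations


# Example specimen: 90 minutes of cold ischemia, 24 hours in fixative.
example = TissueTimingRecord(
    removed_from_patient=datetime(2018, 3, 1, 10, 0),
    placed_in_fixative=datetime(2018, 3, 1, 11, 30),
    removed_from_fixative=datetime(2018, 3, 2, 11, 30),
)

# Illustrative limits only (assumed values, not taken from this article).
example_deviations = find_deviations(
    example,
    max_cold_ischemia=timedelta(hours=1),
    min_fixation=timedelta(hours=6),
    max_fixation=timedelta(hours=72),
)
print(example_deviations)
```

Keeping the limits as parameters rather than constants reflects the point that acceptable ranges may differ by sample type and analytical platform.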

Small changes, big returns

Based on the independent review the PPMPT has conducted over the past two years of the scientific literature related to tissue and blood preanalytics, the team has made five recommendations for each sample type.

At the moment, quality assurance is close to completely absent from the preanalytical phase. Now that we’ve set out some recommendations and guidelines, our next step is to implement our generalized, five-point action plan to ameliorate preanalytical variability (see “Time to Act”). It’s our hope that, by making recommendations and devising ways to achieve them, we can begin the process of establishing a quality assurance ecosystem.

A better biomarker

The future of medicine depends on the development of molecular biomarkers. They can provide more precise diagnosis and patient stratification, detect early disease, elucidate risk of disease, predict disease outcome, response to therapy, and therapeutic toxicities, and permit monitoring of therapeutic management. Unfortunately, despite its importance, biomarker development has historically been fraught with failure. The majority of biomedical discovery research has proven irreproducible or invalid, and very few qualified biomarkers have been produced in the last decade. Failures in biomarker science have translated into failed clinical trials and, ultimately, the inability of biomedicine to deliver on the emerging promise of precision medicine. Rigorous adherence to standards that are consistent, and consistently applied across the development process, is required to achieve the reproducibility we currently lack. Of primary importance, therefore, is the quality of the starting materials – the biospecimens used for analysis. Development of complex biomarker approaches represents an even higher bar. Preanalytical artifacts may abrogate any ability to define biological effects of interest or distinguish biological signatures of importance in patient samples. This problem is especially consequential when the biomarker assay is a companion diagnostic and the gateway to access to a therapy. Neither a false positive nor a false negative biomarker test is tolerable in that circumstance. Regulatory approval of new biomarker assays is now also focused on specimen quality as it relates to the quality of the data on which approvals are based. The biomarker qualification programs of the US Food and Drug Administration and the European Medicines Agency emphasize the need to document the biospecimen quality of diagnostic biomarkers used for either drug or device (assay) development.
It is imperative that the entire biomedical community addresses the need for standardized processes and fit-for-purpose biospecimens to accelerate the delivery of accurate, reproducible, clinically relevant molecular diagnostics for precision medicine.

A recipe for failure

The NBDA, a part of the Complex Adaptive Systems Institute at Arizona State University, for which I serve as Chief Medical Officer, has intensively studied the process by which biomarkers are currently developed and has identified the root causes of most biomarker development and validation failure. The most significant among these include the following issues:

- Discoveries often start with irrelevant clinical questions – that is, questions that may be biologically interesting, but are not useful in clinical practice.

- Biomarker discoveries are often based on “convenience samples” – biospecimens of unknown or poor quality.

- Rigorous, end-to-end, appropriately powered statistical design is often lacking.

- Technology standards are either lacking or disregarded if they exist.

- Data and metadata quality and provenance are often inadequate to poor.

- Analysis and analytics are often inappropriate or inadequate for the sophistication of the clinical question and/or design.

Lessons learned

The amount of clinically meaningful and biologically significant data that we can generate from biospecimens has increased by orders of magnitude in recent years. As our analytical methods and technologies have evolved, however, quality assurance concerns have been focused primarily on how we test specimens – with little or no attention paid to the specimens themselves. Ultimately, no matter how sophisticated and technologically advanced our analytical platforms, the quality of the data can never be higher than the quality of the starting materials – the analytes.

It is now possible to generate petabytes of bad data from bad specimens – and we can do it with unprecedented speed. The stakes are higher than ever. But regardless of how much effort is involved and how far we have to go to ensure full quality control, we need to remember that it’s all worth it for one reason: our patients. They are counting on us.

Carolyn Compton is a Professor of Life Sciences, Arizona State University, and Adjunct Professor of Pathology, Johns Hopkins Medical Institutions, USA.

Time to Act

The five objectives of our generalized action plan to ameliorate preanalytical variability are:

- Verify the “Top 10” preanalytics from the published literature and translate these into practice metrics – and then, of course, publish our findings.

- Propose accreditation checklist questions to CAP’s Laboratory Accreditation Program with the goal of enforcing the Top 10 through the College’s laboratory accreditation process.

- Educate pathologists about the Top 10 list, its scientific basis, and the practice metrics that need to be met to control and record them.

- Educate other professional groups – such as surgeons, nurses, pathology assistants and other healthcare professionals – about patient specimen preanalytics. Assist them, individually as needed, in developing their own practice guidelines to assure specimen quality and in helping to orchestrate overall concordance among practice guidelines throughout the biospecimen chain of custody, from patient to analysis.

- Seek financial support from payors and professional support from regulators and funders to implement and sustain the practices that control – and the infrastructure to document – patient specimen molecular quality for precision medicine and translational research.

References

1. T Khoury et al., “Delay to formalin fixation effect on breast biomarkers”, Mod Pathol, 22, 1457–1467 (2009). PMID: 19734848.
2. DG Hicks, S Kulkarni, “Trastuzumab as adjuvant therapy for early breast cancer: the importance of accurate human epidermal growth factor receptor 2 testing”, Arch Pathol Lab Med, 132, 1008–1015 (2008). PMID: 18517261.
3. T Khoury, “Delay to formalin fixation (cold ischemia time) effect on breast cancer molecules”, Am J Clin Pathol, [Epub ahead of print] (2018). PMID: 29471352.
4. M Baker, “1,500 scientists lift the lid on reproducibility”, Nature, 533, 452–454 (2016). PMID: 27225100.
5. AC Wolff et al., “Recommendations for human epidermal growth factor receptor 2 testing in breast cancer: American Society of Clinical Oncology/College of American Pathologists clinical practice guideline update”, Arch Pathol Lab Med, 138, 241–256 (2013). PMID: 24099077.