Measuring Your Brain Activity on Google Glass

Neuroergonomics, smartwear, spectroscopic brain imaging... Analytical science has never felt so futuristic

Neuroergonomics was defined by the late Raja Parasuraman as “the study of the human brain in relation to performance at work and in everyday settings” (1). But working against his vision is the fact that brain imaging studies tend to be restricted to artificial settings and simulations of actual tasks because of data collection limitations (for example, being tied to a large MRI machine). So when Hasan Ayaz (Drexel University) presented Raja Parasuraman and Ryan McKendrick (both of George Mason University) with the opportunity to ‘play’ with truly mobile neuroimaging, they were excited. And they came up with an interesting experiment: testing the performance benefits of ‘smart’ eyewear.

Participants were asked to walk round a college campus using Google Glass or a handheld device with Google Maps, while wearing a functional near-infrared spectroscopy (fNIRS) headband for brain activity analysis (2). We asked Ryan McKendrick and Hasan Ayaz to tell us what they discovered when they took their neuroimaging technique to the streets...

Could you tell us more about fNIRS?

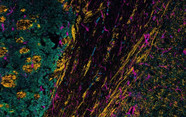

Hasan Ayaz: fNIRS is an emerging neuroimaging technique with a unique set of features that make it a good candidate for monitoring brain activity in natural settings. Specifically, it can measure localized brain activity in a similar way to functional magnetic resonance imaging (fMRI)’s blood oxygen level-dependent (BOLD) signal. When a particular brain area is activated, it requires more oxygen, and a surge of oxygen-rich blood is delivered immediately via neurovascular coupling. By tracking these tiny fluctuations, we can monitor activation of different areas, which is already proving useful in certain clinical and field applications.

Ryan McKendrick: fNIRS has low usage costs, and it allows for continuous data collection, so it works really well for longitudinal neuroimaging studies – and it is also incredibly quick to set up relative to other neuroimaging techniques. fNIRS is also very robust to motion, which is a huge benefit, considering our study took place while participants were constantly moving! Finally, because it is based on neurovascular coupling, we can leverage a lot of the basic research from fMRI in interpreting our data and developing theories.

Why did you focus on ‘smart’ eyewear?

HA: It gave us a unique opportunity to investigate how ambulatory systems, such as navigation aids, are used and how the brain engages during use in natural environments.

RM: Companies developing new technologies often focus on minimizing frustration for users, by maximizing intuitiveness and ease of use. These attributes are important and easy to test; however, if performance gains and integration with our neurobiology are not tested, we are missing a huge opportunity for societal advancement.

What did you discover?

RM: First, we found that a wearable display can give the user more cognitive reserves than a handheld device, so they can carry out other tasks more effectively. This may potentially lead to better awareness, integration and future projection of information from our surroundings. However, this benefit was diminished by improper display design. Google Glass simply imported its symbology from Google Maps, which may have been the simplest solution, but it doesn’t seem to have been the best. The issue was that Glass grabbed attention away from the environment, itself a well-known issue for heads-up and head-mounted displays. Had more attention been paid to designing the symbology for Glass around user behavior and brain activity, we believe Glass users could have blown away the phone users in our study.

What’s next?

HA: I will investigate emerging applications of fNIRS, from medical to field settings. In particular, I am intrigued by neuroergonomics and by how brain imaging could be used to improve product design and user interfaces. I believe this approach can be useful for many complex systems.

RM: I see neuroergonomics as a robust science for vetting new technology. My future work aims to solidify neuroergonomics as an adoptable toolkit within academia and industry, and to grow the field via integration with other techniques from machine learning and applied cognitive architectures, as well as pharmacodynamics and kinetics. I’m also looking at creating better models of in situ brain dynamics.

1. R Parasuraman et al., “Neuroergonomics: The brain in action and at work”, Neuroimage, 59, 1-3 (2012).

2. R McKendrick et al., “Into the wild: neuroergonomic differentiation of hand-held and augmented reality wearable displays during outdoor navigation with functional near infrared spectroscopy”, Front Hum Neurosci, 10, 216 (2016).

A former library manager and storyteller, I have wanted to write for magazines since I was six years old, when I used to make my own out of foolscap paper and sellotape and distribute them to my family. Since getting my MSc in Publishing, I’ve worked as a freelance writer and content creator for both digital and print, writing on subjects such as fashion, food, tourism, photography – and the history of Roman toilets. Now I can be found working on The Analytical Scientist, finding the ‘human angle’ to cutting-edge science stories.