For me, chromatography is always just a temporary solution. Separation of a sample is not the goal, but the means to answer an important question. For instance: does this pill contain enough of the active pharmaceutical ingredient? Is this food product safe? One day, we will no longer separate mixtures at all, but use a sensor to answer our questions. In fact, the question is no longer if, but rather when sensors will take over from chromatography and mass spectrometry. A massive number of articles have been published on sensors. Some sensors perform very well but, overall, the performance is inferior to that of chromatography. However, as chromatographers, we should not wait until we are overtaken by sensor scientists. We must use what they already have to improve our methods.

A sensor operates through molecular recognition and specific interactions, with a transducer then converting the interaction into a measurable signal. Very good progress has been made in molecular recognition, and the field of chromatography stands to benefit. Successful use of molecularly imprinted polymers has so far eluded me, and immunoaffinity isolation has only limited applications.

However, while reading an article by Dang et al., I was struck by the enormous progress made in recognition using aptamers. Aptamers are single-stranded oligonucleotides or peptides that can bind with high specificity to target molecules, similar to antibody–antigen interactions. Selective aptamers have been developed for numerous targets, including bacterial toxins, veterinary drugs, and pesticide residues. We chromatographers should steal with pride from the concepts developed by sensor scientists. If we can build aptamer-based selective recognition into our sample preparation systems, or maybe even into our chromatographic columns, we can combine the best of both worlds.

Hans-Gerd’s Landmark Paper N Dang et al., “Advances in aptasensors for the detection of food contaminants”, Analyst, 141, 3942–3961 (2016).

At RIC, research on sample preparation is one of our key activities. We are continuously evaluating new ideas (and re-evaluating old ones) to improve and automate this most important step in the analytical cycle. Many papers are published every year on sampling and sample preparation but, unfortunately, too many can never be applied in a routine environment. Often, performance is illustrated on outdated instrumental set-ups (for example, HPLC with UV detection or capillary GC with FID), at unrealistically high concentrations, in spiked samples. Today, sample preparation research should be performed on state-of-the-art instrumentation and at the required sensitivities – which are often very low (sub-ppb!). Despite the many disappointments in the recent literature related to sample preparation, some significant achievements were announced in 2016. My chosen landmark paper first attracted my attention with its well-chosen title: “Hard cap espresso machines in analytical chemistry: what else?” We are all very familiar with espresso coffee machines in our laboratories, but Sergio Armenta, Miguel de la Guardia and Francesc A Esteve-Turrillas from the University of Valencia, Spain, demonstrated that they can very quickly, efficiently and cost-effectively perform extractions of solid matrices other than coffee!

A slightly modified hard cap espresso machine with a working pressure of 19 bar was used in combination with liquid chromatography and fluorescence detection for the determination of polycyclic aromatic hydrocarbons (PAHs) in soil and sediment samples. The PAHs were extracted from 5.0 g of sample, previously homogenized, freeze-dried and sieved to 250 µm. The sample was homogenized with a dispersing agent and loaded into a refillable stainless steel capsule, and 50 mL of 40 percent acetonitrile in water was percolated through it at 72 ± 3 °C, with a total extraction time of 11 s. The limits of detection for the PAHs ranged from 2 to 85 µg/kg, and recoveries from spiked and aged samples ranged from 81 to 121 percent, with relative standard deviations lower than 30 percent. Two PAH-containing certified reference materials – a clay soil and a sediment – were used to evaluate the extraction efficiency and the trueness of the espresso method. Comparison of the results with the certified values indicated good agreement. Five real soil samples were analyzed by the developed procedure and also by an ultrasound extraction (USE) method using 100 mL acetonitrile and an extraction-sonication time of 30 min. The ∑PAHs ranged from 34 to 827 µg/kg, which tallies with other studies performed in urban and industrial areas. The hard cap espresso data and the USE data were statistically comparable. USE is a relatively cheap method, but it is time-consuming and less green than the espresso method. Other methods that can be successfully applied to the same problem, such as supercritical fluid extraction, microwave-assisted extraction and pressurised solvent extraction/accelerated solvent extraction, share the same drawbacks as USE and, moreover, require expensive equipment.

The paper does have some important weaknesses. LC-fluorescence detection was selected for the determination of the individual PAHs, which is fine, but two pairs of PAHs co-elute, namely benz[a]anthracene-chrysene and dibenz[a,h]anthracene-benzo[ghi]perylene. Dedicated PAH columns on which the two pairs are completely separated are available from different companies. Moreover, the LC-fluorescence chromatograms shown in Figure 4 of the paper are far from state-of-the-art PAH analysis, and it is surprising that a journal like Analytical Chemistry has accepted these chromatograms for publication! Despite this last remark, I would like to congratulate the authors for their ingenuity. The ‘espresso approach’ is unlikely to find its way into environmental laboratories dealing with hundreds of samples every week, but it could prove a valuable tool in R&D and for educational purposes.

Pat’s Landmark Paper S Armenta et al., “Hard cap espresso machines in analytical chemistry: what else?”, Anal Chem, 88, 6570–6576 (2016).

For my landmark paper, I have selected a review on nuclear forensics published in Trends in Analytical Chemistry. The article highlights some of the unique challenges of nuclear and radioanalytical chemistry: the importance of mass spectrometry, the reliance on separations for the elimination of isobaric interferences, and the increasing but relatively recent adoption of approaches developed in the broader analytical community, such as chelation/extraction chromatography. Alongside these special considerations, radioanalytical chemists face the same challenges as the broader analytical community, such as demands for fast analysis, measurement robustness, sample preparation, sensitivity versus selectivity, matrix effects, and availability of standard reference materials. This paper highlights some of the interesting approaches that have evolved to address these issues (e.g., borate fusion as an alternative to hydrofluoric acid digestion). The paper caught my attention partly because I was involved in nuclear forensics projects shortly before moving to my current position in Ireland. The area is absolutely fascinating, for several reasons. First, many of the current analytical methodologies in nuclear and radioanalytical chemistry are based on technology that evolved during the Cold War. Second, researchers are problem-driven, and work in the broader analytical community is being adapted and adopted into the nuclear and radioanalytical chemistry areas. Third, my curiosity to explore completely new areas like this helps me refine my checklist of analytical questions that apply to other problems I might be working on. For instance, in the less familiar spectroscopies (alpha, beta, gamma) mentioned in the article, what is the specific information obtained and how are the emitted particles/radiation detected? How is signal resolution accomplished? Are there parallels to spectroscopies (fluorescence, mass spectrometry, and so on) that I am more familiar with? 
How does the radioanalytical community approach background correction to compensate for matrix effects and quenching? Does common terminology such as isobaric interferences, background correction or quenching describe fundamentally different or related phenomena, and are there differences in peak shape analysis between the two communities? What constitutes a standard reference material when the substance being measured is continually changing through radioactive decay?

The analytical chemistry community is broad and sometimes divided by a common language. But, as with chatting to an aging relative at a family gathering, we can all learn a lot by having conversations across divides and communities. In short, papers like the one I have selected can promote dialogue and cross-pollination.

Apryll’s Landmark Paper IW Croudace et al., “Recent contributions to the rapid screening of radionuclides in emergency responses and nuclear forensics”, Trends Anal Chem, 85, 120–129 (2016).

I often learn of earlier, highly important advances via references from recent publications. So it was when I backtracked from recent literature to a 2014 publication that reveals how it is possible to generate femtosecond photon pulses in the gamma-ray spectral region (1). First, it is appropriate to inquire why an analytical scientist would be interested in such pulses. The answer is that femtosecond photon pulses of GeV energy could be useful in nuclear resonance fluorescence (2), (3), radiographic imaging (4), and generally in the detection of many elements in the periodic table. How, then, are such pulses generated? Let us begin at the ‘business’ end of the system. First, let us assume we have a relativistic pulse of electrons (electrons traveling at nearly the speed of light), only a few femtoseconds long, and all traveling in exactly the same direction. A beam of laser light aimed at the electrons, perfectly opposite their direction of travel, will be scattered from the electrons (a process termed Thomson scattering [5]), and the scattered light will be Doppler-shifted by an amount related to the electrons’ velocity. In a preferred direction of scattering, back against the incident laser beam, the Doppler-shifted light will possess photon energies in the MeV to GeV (gamma-ray) region. Of course, the duration of the high-energy photon burst will depend on the length of the electron and incident-laser pulses. Further, its bandwidth (energy spread) will be a function of the energy range of the electron bunch and its degree of collimation. The relativistic electrons on which this process depends can originate from a suitable accelerator, but there are now better, more compact ways that yield femtosecond pulses directly. In particular, electrons generated within a plasma can ‘ride’ the wake of the electromagnetic field produced by a femtosecond laser pulse and will achieve relativistic velocities. 
Such a ‘laser plasma accelerator’ can be just a few centimeters in size and the electron-pulse length will depend only on the duration of the laser input (6).
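As a rough numerical illustration of the Doppler up-shift described above, the following Python sketch estimates the on-axis photon energy for head-on backscattering in the Thomson limit, where electron recoil is neglected and the photon energy scales approximately as 4γ² times the laser photon energy. The 800 nm laser wavelength and the 250 MeV electron energy are assumed values chosen only for illustration; they are not taken from the cited papers, and the function names are hypothetical.

```python
import math

ELECTRON_REST_ENERGY_EV = 0.51099895e6  # electron rest energy m_e c^2, in eV
HC_EV_NM = 1239.841984                  # Planck constant times c, in eV nm


def laser_photon_energy_ev(wavelength_nm: float) -> float:
    """Photon energy (eV) of light with the given wavelength (nm)."""
    return HC_EV_NM / wavelength_nm


def lorentz_factor(kinetic_energy_ev: float) -> float:
    """Lorentz factor (gamma) of an electron with the given kinetic energy (eV)."""
    return 1.0 + kinetic_energy_ev / ELECTRON_REST_ENERGY_EV


def backscattered_photon_energy_ev(electron_kinetic_energy_ev: float,
                                   photon_energy_ev: float) -> float:
    """On-axis scattered photon energy (eV) for a head-on collision.

    Thomson limit (recoil neglected): the laser photon is Doppler-shifted
    twice, giving a factor (1 + beta) / (1 - beta) = gamma^2 (1 + beta)^2,
    which approaches 4 gamma^2 for highly relativistic electrons.
    """
    gamma = lorentz_factor(electron_kinetic_energy_ev)
    beta = math.sqrt(1.0 - 1.0 / gamma**2)
    return gamma**2 * (1.0 + beta)**2 * photon_energy_ev


# Assumed, illustrative inputs (not from the cited papers).
laser_ev = laser_photon_energy_ev(800.0)                  # about 1.55 eV
gamma_ray_ev = backscattered_photon_energy_ev(250e6, laser_ev)
print(f"Scattered photon energy: {gamma_ray_ev / 1e6:.2f} MeV")
```

With these assumed inputs the scattered photons land in the MeV range, and because the energy grows with the square of γ, doubling the electron energy roughly quadruples the photon energy. The same scaling explains why the photon bandwidth tracks the energy spread of the electron bunch.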

Not surprisingly, for this whole sequence to proceed efficiently, high laser power densities are required to generate the initial relativistic electrons, and probably at least one extra stage of electron acceleration. And that brings me neatly back to the publication that initially caught my eye, which shows how it all appears possible (7). And why, you might ask, did such a paper appear in my inbox? Well, of course, it didn’t; rather, it was the result of what might be called ‘inquisitive browsing’, which is becoming increasingly uncommon and inconvenient in the modern era of focused online literature searching. My message for the “young ‘uns” is to take time occasionally to look at the tables of contents of journals outside or at least peripheral to one’s main field of interest. A corollary, of course, is to attend conference lectures, especially reviews, in other areas...

Gary’s Landmark Papers S Steinke et al., “Multistage coupling of independent laser-plasma accelerators”, Nature, 530, 190–193 (2016). S Rykovanov et al., “Quasi-monoenergetic femtosecond photon sources from Thomson Scattering using laser plasma accelerators and plasma channels”, J Phys B: At Mol Opt Phys, 47, 234013 (2014).

Ten years ago, the average analysis times in HPLC were in the range of 20–30 minutes, and the field was largely focused on developing faster chromatographic processes. Now, it is possible to routinely achieve HPLC separations within a few minutes or even less, while maintaining excellent quantitative performance: speed of HPLC is no longer the issue. The problem now facing the field is that our samples are increasingly complex, particularly in the –omics (lipidomics, proteomics or metabolomics). Therefore, there is a need to develop analytical strategies able to separate a huge number of compounds contained within a complex sample. For this purpose, comprehensive 2D-LC has emerged over the last few years as a promising (but more complex) alternative to very high-resolution 1D separation. Beyond separation techniques, high-resolution mass spectrometry (HRMS) is becoming ever more popular and powerful, particularly for untargeted analysis of complex samples. Also in the field of mass spectrometry, there is increasing interest in ion mobility spectrometry (IMS), which can be added ahead of MS to further increase its resolving power. My selected paper describes the combination of 2D-chromatography and a powerful mass spectrometry approach (IMS-HRMS), which enables the separation of complex samples in four dimensions (two chromatographic, one mobility and one mass spectrometry). Obviously, there are many constraints when implementing such a complex analytical setup. There is a clear need to improve the software to manage the LC+LC-IMS/MS setup and – above all – the data treatment, especially because a 4D analytical setup has never before been implemented. Nevertheless, the authors successfully applied the strategy to characterize plant extracts and achieved impressive performance, with a peak capacity of 8,700 for an analysis time of two hours. In addition, for each peak observed on the 2D-LC chromatogram, the collision cross section and m/z values are available.
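To give a feel for why stacking separation dimensions is attractive, here is a minimal Python sketch of the idealized product rule for multidimensional peak capacity, in which the total capacity is the product of the per-dimension capacities. It assumes fully orthogonal dimensions, with an optional coverage fraction as a crude correction when the separation space is only partly used. The per-dimension numbers are hypothetical, the function name is an illustrative choice, and the sketch makes no attempt to reproduce how the authors arrived at their value of 8,700.

```python
from math import prod


def ideal_peak_capacity(capacities, coverage=1.0):
    """Idealized multidimensional peak capacity (product rule).

    capacities: peak capacity of each separation dimension.
    coverage:   fraction (0 to 1] of the separation space actually used;
                1.0 corresponds to perfectly orthogonal dimensions.
    """
    if not 0.0 < coverage <= 1.0:
        raise ValueError("coverage must be in the interval (0, 1]")
    return prod(capacities) * coverage


# Hypothetical per-dimension capacities, for illustration only.
first_dimension = 100   # assumed first-dimension LC peak capacity
second_dimension = 20   # assumed second-dimension LC peak capacity

print(ideal_peak_capacity([first_dimension, second_dimension]))
print(ideal_peak_capacity([first_dimension, second_dimension], coverage=0.5))
```

In practice, correlated retention mechanisms and undersampling of the first dimension reduce the achievable capacity well below the ideal product, which is one reason why the effective figures reported for real setups are lower than a naive multiplication would suggest.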

The paper is remarkable in that it combines the latest progress in both chromatography and mass spectrometry. It is difficult to find research groups with expertise in these disparate and complex analytical strategies – and trying to combine so-called LC+LC and IMS-HRMS is impressive. The paper proves that chromatography and mass spectrometry should always be combined rather than opposed. The limited resolving power of IMS-MS means that combination approaches like LC-IMS/MS or LC+LC-IMS/MS are certainly the best and most powerful strategy. In the future, I would like to see this approach evaluated for the characterization of –omics samples, as the number of detected features could certainly be strongly enhanced when using these multiple separation dimensions. Another application could be the analytical characterization of biopharmaceuticals, including monoclonal antibodies, antibody–drug conjugates, and bispecific antibodies. The addition of IMS to the more commonly used 2D-LC-MS setup would be of particular interest because it could help to differentiate isobaric compounds that are not chromatographically separated and also have the same m/z ratio.

Davy’s Landmark Paper S Stephan et al., “A novel four-dimensional analytical approach for analysis of complex samples”, Anal Bioanal Chem, 408, 3751–3759 (2016).

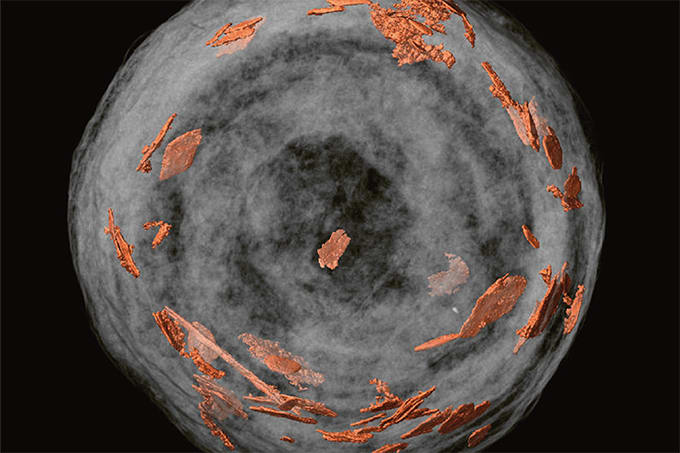

Transition metal dichalcogenides (TMDCs) are a family of novel materials with a hexagonal-layered lattice that is bonded to adjacent layers by weak van der Waals forces. TMDCs have generated significant scientific interest in areas ranging from photodetectors and solar cells to light-emitting devices and chemical sensors. Single-layer TMDCs give rise to strong photoluminescence, simultaneously emitted from up to three emissive excitons (a neutral exciton, an electron and hole bound together; a biexciton, two neutral excitons bound weakly; and a trion, two electrons and one hole). The spectroscopy and applications of these 2D TMDC platforms are very rich and their potential for optical/stand-off chemical sensing attracted my interest. In my landmark paper, the authors implement tip-enhanced photoluminescence (TEPL) microscopy in concert with tip-enhanced Raman scattering (TERS) spectroscopy to perform sub-diffraction limited mapping of single-layer MoS2 flakes at 20 nm spatial resolution. These small flakes are a few microns in size and are composed of a single MoS2 layer. Thus, all points on the flake should behave similarly. What Su et al. discovered is that there is substantial lateral heterogeneity in this system on a 20 nm scale that is not observable by a traditional confocal experiment. Further, the work function of the metallic tip (Ag, Au) had a profound impact on the exciton amplitudes and their relative distribution, and could be used to tune local excitonic processes within the 2D material. The potential of 2D TMDCs for optoelectronic device fabrication and heterostructure design is clearly predicated on the ability to make materials that are actually chemically and electronically homogeneous across the device’s operating length scale.

Frank’s Landmark Paper W Su et al., “Nanoscale mapping of excitonic processes in single-layer MoS2 using tip-enhanced photoluminescence microscopy”, Nanoscale, 8, 10564–10569 (2016).

References

1. S Rykovanov et al., “Quasi-monoenergetic femtosecond photon sources from Thomson Scattering using laser plasma accelerators and plasma channels”, J Phys B: At Mol Opt Phys, 47, 234013 (2014).
2. E Booth et al., “Nuclear resonance fluorescence from light and medium weight nuclei”, Nucl Phys, 57, 403–420 (1964).
3. G Suliman et al., “Gamma beam industrial applications at ELI-NP”, In: Int J Mod Phys: Conference Series, 1660216, World Scientific: 2016.
4. TL Fauber, Radiographic imaging and exposure, 5th edition, Elsevier Health Sciences: 2016.
5. M Huang, GM Hieftje, “Thomson scattering from an ICP”, Spectrochim Acta B, 40, 1387–1400 (1985).
6. CG Geddes et al., “Compact quasi-monoenergetic photon sources from laser-plasma accelerators for nuclear detection and characterization”, Nucl Instr Meth Phys Res B, 350, 116–121 (2015).
7. S Steinke et al., “Multistage coupling of independent laser-plasma accelerators”, Nature, 530, 190–193 (2016).