“Your Efficiency Challenge” is an exciting project from Agilent Technologies and The Analytical Scientist that helps you identify and address inefficiencies in your lab. Part I introduced the project – and kicked off a survey gathering views on efficiency in liquid chromatography from over 1,400 respondents. In Part II, we shared some of the results of the survey, and determined topics for a series of lively roundtable discussion webinars. Parts III–V bring together key points from the survey results and webinars, to help move the conversation forward.

In the first roundtable video, we sat down with pharma pioneer Kelly Zhang (Genentech) and LC expert Udo Huber (Agilent) to discuss efficiency at the analytical scale – and to discover how the right technology can help you push your results to the next level.

What does analytical efficiency mean to you?

Kelly Zhang: In the pharmaceutical industry, our primary goal is to get first- and best-in-class medicines to patients as fast as we can. To do that, we need the data to be not only fast, but also informative. If we acquire data quickly but then have to spend a long time analyzing it to get the information we need, the overall process is not efficient.

Udo Huber: To me, analytical efficiency is everything that helps you to get better quality data, so you can be sure that you see all the peaks and all the impurities in your sample. In turn, higher quality data gives you more confidence – and I think that’s very important, especially for the pharmaceutical industry.

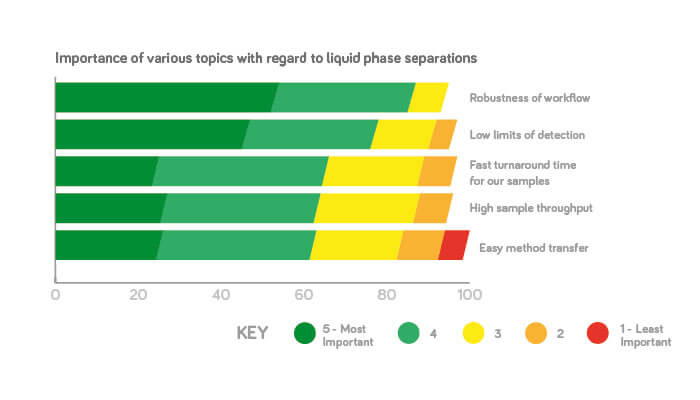

In our survey, the most important consideration in liquid phase separations was robustness of the entire workflow – 87 percent of respondents rated it very important or important. Does that number surprise you?

Udo: I’m not surprised. Remember that robustness is more than the instrument and the chromatographic run – if you think about the whole workflow, there are so many points where something can go wrong. Nobody wants mistakes – and that’s true in all industries, not just pharma.

Kelly: I would also rate robustness number one. When it comes to patient safety, nothing can be allowed to compromise robustness; without a robust method and technology that generates quality data, we cannot be sure that our medicines are safe enough.

The second most important consideration was low limits of detection (78 percent) – but another part of the survey says that only 30 percent of users operate close to the limits of detection. Any comments?

Kelly: I think it depends on who you ask. My high-throughput automation team, running hundreds of thousands of samples, is less concerned with sensitivity; they care more about how fast you can analyze one sample. But for the project team, sensitivity is a critical quality attribute – we don’t want to miss any impurities, especially if they are toxic.

Udo: It doesn’t surprise me that it shows up high on the list. For industries such as food safety or environmental analysis, the regulatory agencies are setting lower and lower limits, and customers can’t achieve that with the current instrumentation. I am surprised that separation performance or power wasn’t higher, however. What we hear from the pharmaceutical industry – as well as from other industries – is that customers are afraid they are missing compounds in their chromatograms.

How do we drive the analytical workflow to improve robustness?

Kelly: Simple: all methods must be validated. We need to follow the validation protocol, making sure we can achieve the same result day to day, instrument to instrument, site to site and operator to operator.

Udo: People also need to ensure they include basic system care in their standard operating procedures; it’s an occasionally forgotten element that helps keep your analysis or your system robust.

How can we improve robustness from a technology point of view?

Kelly: We always try to get the best instruments we can from the most reputable vendors; for example, we are now moving to UHPLC. We also try to reduce human error – so automation is another area where we can try to make the technology more robust.

Udo: I always recommend that you choose the system according to your application. For example, if you need to run a shallow gradient for your analysis, you should use a binary high-pressure mixing pump – because by design those pumps have a higher performance for shallow gradients. It may mean you have to invest in a binary or even a UHPLC pump. You could consider investing in a thermostat for your autosampler that keeps the temperature constant. Such simple investments really pay off after a relatively short period of time.

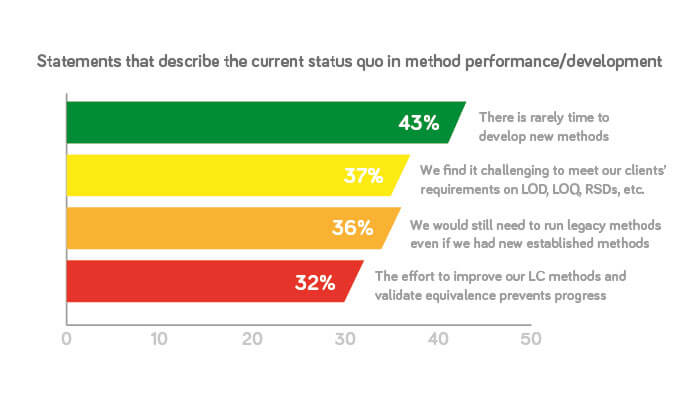

Thirty-six percent of respondents said they would run legacy methods even if newer established methods were better or faster, and 19 percent said regulatory risks prevented them from applying the latest methodology. How can scientists stay at the cutting edge?

Kelly: We think about this constantly. We always try to keep ourselves at the cutting edge, but it depends on the stage and the purpose of the technology. For example, we can apply a new technology within the research lab, but when it goes into a regulatory environment, it is not that straightforward. You have to make sure you do a full method evaluation. And there are always regulatory concerns. If you have an established method that works, not many people will be motivated to change it.

Udo: We always get this request from our customers and so we invest a lot of R&D resources into ensuring that our customers can run their legacy methods even on the new instruments. We understand that customers may be reluctant to move away from their legacy methods; however, it’s always possible to make small changes that improve and speed up the method.

Any final pearls of wisdom?

Udo: I think there is a way for everyone to improve analytical efficiency – but you really have to commit to it! People sometimes don’t want to change, but without change there will be no efficiency gains.

Kelly: When we talk about overall analytical efficiency, we certainly need incremental optimization to make current processes more robust and faster – but we must also be on the lookout for game-changing technologies. For example, when we analyze a sample, we must normally use multiple methods – but what if there was a single technology that could provide us with all quality attributes at the same time? It would be a huge improvement in efficiency!

Are you a scientist, laboratory analyst or lab manager – or all of the above? Are you willing to challenge your perception of efficiency or do you already know you need to make efficiency gains?

Join our experts – Kelly Zhang, Udo Huber, Stéphane Dubant, Adrian Dunn, Wolfgang Kreiss, Martin Hermsen and Oliver Rodewyk – for an exclusive series of video webinar masterclasses:

- Webinar 1 – Analytical efficiency: How to push your results to the next level by selecting suitable technology

- Webinar 2 – Instrument efficiency: How to survive the sample onslaught and even create a little breathing room

- Webinar 3 – Laboratory efficiency: How to plan for success and secure your future
